If you've been using AI tools for any length of time, you've probably hit a confusing moment. Maybe your chatbot suddenly "forgot" something important from earlier in the conversation. Or you opened Photoshop's Generative Fill and noticed a credit counter ticking down. Perhaps you glanced at an API pricing page and saw costs listed per "1K tokens."

When we interact with AI, we think in words and images. The AI thinks in tokens and credits. Understanding these two concepts makes it far easier to get better results and keep an eye on costs.

Part 1: How Text AI Actually "Reads"

When you type a prompt into a text-based AI like Claude, ChatGPT, or Gemini, it doesn't read your sentences the way a human does. Before processing your request, it breaks your text into small, digestible pieces.

Those pieces are called tokens.

Simple words: Short, common words like "cat," "run," or "the" are often just one token.

Complex words: Longer or rarer words get split up, so "unbelievable" might become three tokens.

Punctuation and spaces: Commas, periods, and other symbols can also count as tokens, depending on how the model's tokeniser works.

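A useful rule of thumb: 1,000 tokens equals roughly 750 English words. The exact ratio varies by tokeniser and by language, so treat it as an estimate rather than a guarantee.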
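To make the rule of thumb concrete, here is a minimal Python sketch that converts between words and tokens. The function names and the default ratio of 750 words per 1,000 tokens are assumptions taken from the rule above, not any provider's official tokeniser, so real counts will differ.

```python
def estimate_tokens(word_count: int, words_per_1k_tokens: int = 750) -> int:
    """Rough token estimate for English text, using the 750-words-per-1K rule."""
    return round(word_count * 1000 / words_per_1k_tokens)


def estimate_words(token_count: int, words_per_1k_tokens: int = 750) -> int:
    """Rough word estimate for a given token budget."""
    return round(token_count * words_per_1k_tokens / 1000)


print(estimate_tokens(750))   # 1000
print(estimate_tokens(3000))  # 4000
print(estimate_words(8000))   # 6000
```

For an exact count, use the tokeniser that belongs to the specific model you are working with.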

The Context Window: Why AI Forgets

Every AI model has what's called a "context window." Think of it as a short-term memory bucket with a hard limit on how many tokens it can hold at once.

Everything from your conversation has to fit inside:

Your original instructions

Every question you've asked

Every answer the AI has given back

When you're deep into a long conversation or you've pasted in a massive document, this bucket fills up. Once it's full, something has to give: most chat apps drop or truncate the oldest content to make room for new tokens. That's why your AI might suddenly ignore a rule you set at the beginning of the chat. It's not being stubborn; it has simply run out of room.
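The "oldest content goes first" behaviour can be sketched in a few lines of Python. This is an illustration under stated assumptions, not how any particular product works: `count_tokens` is a crude stand-in based on the words-to-tokens rule of thumb, and `fit_to_window` is a hypothetical helper. Real apps may instead summarise old messages or pin the original instructions.

```python
def count_tokens(text: str) -> int:
    """Crude stand-in for a tokeniser: about 4 tokens per 3 words, rounded up."""
    words = len(text.split())
    return -(-words * 4 // 3)  # ceiling division


def fit_to_window(messages: list[str], max_tokens: int) -> list[str]:
    """Keep the most recent messages that fit; older ones fall out."""
    kept = []
    used = 0
    # Walk backwards from the newest message and stop when the budget is spent.
    for message in reversed(messages):
        cost = count_tokens(message)
        if used + cost > max_tokens:
            break
        kept.append(message)
        used += cost
    kept.reverse()  # restore chronological order
    return kept


chat = [
    "Always answer in French.",            # the rule set at the start
    "What is a token?",
    "A token is a small piece of text.",
    "And a context window?",
]

# With a tight budget, the opening instruction is the first thing to go.
print(fit_to_window(chat, max_tokens=24))
```

With a budget of 24 estimated tokens, the three most recent messages fit and the opening rule does not, which is the "forgetting" described above.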

Input vs Output: The Cost of Thinking

From a billing perspective, not all tokens are created equal. Most API pricing separates input tokens from output tokens.

Input tokens (reading): What you send in: prompts, documents, instructions.

Output tokens (writing): What the AI generates: summaries, code, emails.

Output tokens typically cost more than input tokens because the model generates its reply one token at a time, which takes more computation than reading your input in a single pass. This isn't universal, but it's a safe working assumption: longer requested outputs usually cost more on paid APIs.
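A quick way to see the input/output split is to price a single request. The sketch below uses made-up prices (0.003 per 1K input tokens and 0.015 per 1K output tokens) purely for illustration; they are assumptions, not any provider's real rates, so check the current pricing page before budgeting.

```python
def estimate_cost(
    input_tokens: int,
    output_tokens: int,
    input_price_per_1k: float,
    output_price_per_1k: float,
) -> float:
    """Cost of one request when input and output are priced separately."""
    input_cost = input_tokens / 1000 * input_price_per_1k
    output_cost = output_tokens / 1000 * output_price_per_1k
    return input_cost + output_cost


# Hypothetical prices, for illustration only.
INPUT_PRICE = 0.003
OUTPUT_PRICE = 0.015

short_answer = estimate_cost(2000, 500, INPUT_PRICE, OUTPUT_PRICE)
long_answer = estimate_cost(2000, 4000, INPUT_PRICE, OUTPUT_PRICE)

print(f"Short answer: {short_answer:.4f}")
print(f"Long answer:  {long_answer:.4f}")
```

The prompt is the same in both cases; only the requested output length changes, and with these example prices it dominates the total.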

Part 2: The Visual Artist: Credits

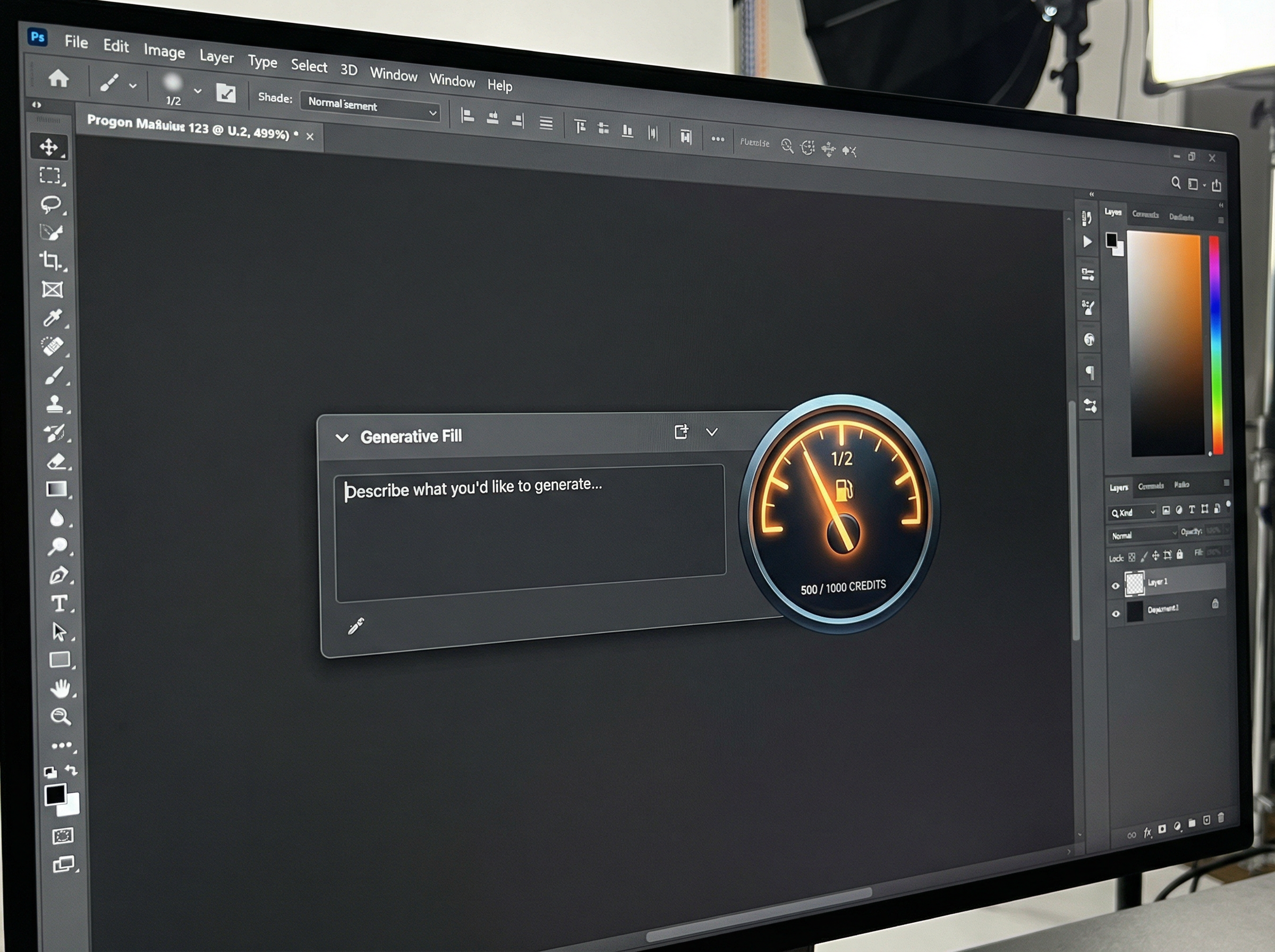

Text models run on tokens, but what about Photoshop's Generative Fill or image tools like Midjourney and Flux? These systems might use tokens behind the scenes, but what you see is usually a simpler "per-generation" approach.

Here's a helpful analogy: paying for a text model is like riding in a taxi, where the meter keeps running until you reach your destination. When you ask for an image, you're ordering a fixed item: "one 1024×1024 image." So billing becomes straightforward: one generation, one charge.

How Adobe Uses Generative Credits

Photoshop's Generative Fill and other Firefly-powered features run on "Generative Credits." Your Adobe plan gives you a monthly allowance, and each generative action consumes part of that allowance.

Most standard features use one credit per generation.

More intensive features or very large outputs can consume multiple credits, according to Adobe's rate cards.

The length of your prompt usually doesn't affect the credit cost. What matters is the type of operation and sometimes the output size.

Other visual platforms work similarly, often charging different amounts based on resolution or quality settings, since bigger, higher-quality images demand more computing power.
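Credit-based billing is simple enough to model in a few lines. In this rough sketch, the operation names and the credits-per-generation table are hypothetical placeholders, not Adobe's actual rate card. The point is only that cost depends on the operation type and the number of generations, not on prompt length.

```python
# Hypothetical rates for illustration; real values come from the vendor's rate card.
CREDITS_PER_GENERATION = {
    "standard": 1,   # e.g. a typical fill or image generation
    "intensive": 4,  # e.g. a larger or more demanding output
}


def credits_needed(operation: str, generations: int) -> int:
    """Credits consumed by repeating one operation a number of times."""
    return CREDITS_PER_GENERATION[operation] * generations


def credits_remaining(monthly_allowance: int, jobs: list[tuple[str, int]]) -> int:
    """Allowance left after a list of (operation, generations) jobs."""
    spent = sum(credits_needed(op, count) for op, count in jobs)
    return monthly_allowance - spent


print(credits_needed("standard", 10))  # 10
print(credits_remaining(100, [("standard", 10), ("intensive", 5)]))  # 70
```

Note that the prompt text never appears in the calculation: a one-word prompt and a paragraph-long prompt cost the same under this model.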

Why Some AI Uses More "Juice"

Depending on what you're doing, you can burn through your limits faster than expected.

For text AI (tokens):

Long inputs: Pasting a 50-page transcript into your prompt can devour a huge chunk of both your context window and token budget before the model even starts responding.

Long outputs: Asking for a detailed, multi-page answer consumes far more output tokens, and therefore more compute and money, than requesting a tight one-paragraph summary.

For image AI (credits):

Quantity: Generating 10 variations costs roughly 10 times as many credits as generating one image, because each generation is its own job.

Resolution and complexity: Higher resolutions or video-like outputs often consume more credits per job, reflecting the extra server work required.

The Takeaway

You don't need to be a mathematician to use AI well, but understanding tokens and credits makes you a far better pilot.

If your text AI starts getting confused or ignoring earlier instructions, you've likely pushed past its context window. Try trimming or summarising earlier content to free up space. If you're worried about image costs, invest time in a clear, targeted prompt and sensible resolution settings. Get what you need in as few generations as possible, rather than brute-forcing dozens of variations.

Master these invisible currencies, and you'll get better results while keeping your costs under control.