Mention HDR to most photographers, and they immediately picture the overprocessed, crunchy look that was popular around 2010, or complex multi-exposure bracketing. True HDR is different: it works from a single image and uses the extra brightness and tonal range that modern screens can actually display.

This step-by-step guide covers how to edit, export, and share true HDR images using Lightroom Mobile, Desktop, or Classic.

Step 1: Check Your Screen Compatibility

Before editing, you need to know whether your device can actually display high dynamic range.

Many modern screens support it, including recent iPhones, recent iPad Pro models, and MacBook Pros with Liquid Retina XDR displays, but most standard monitors do not.

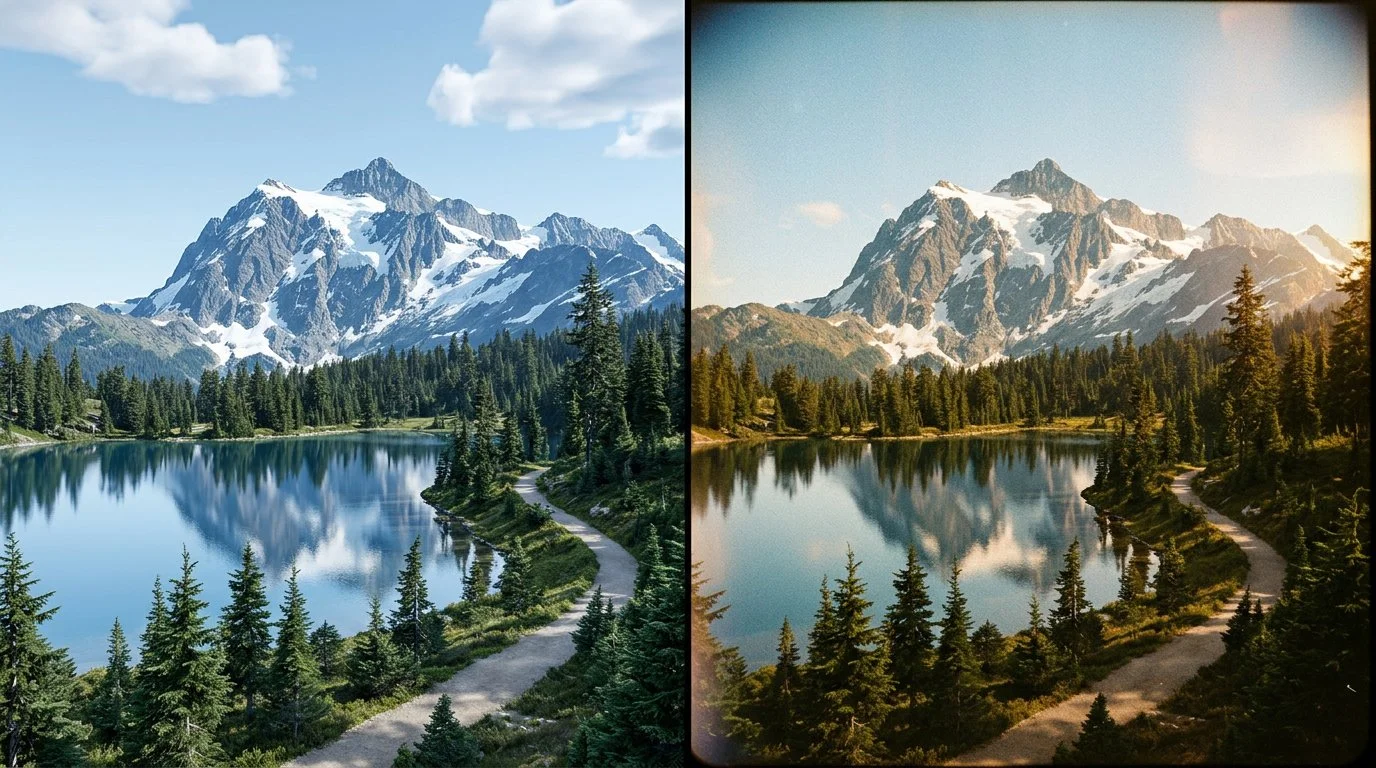

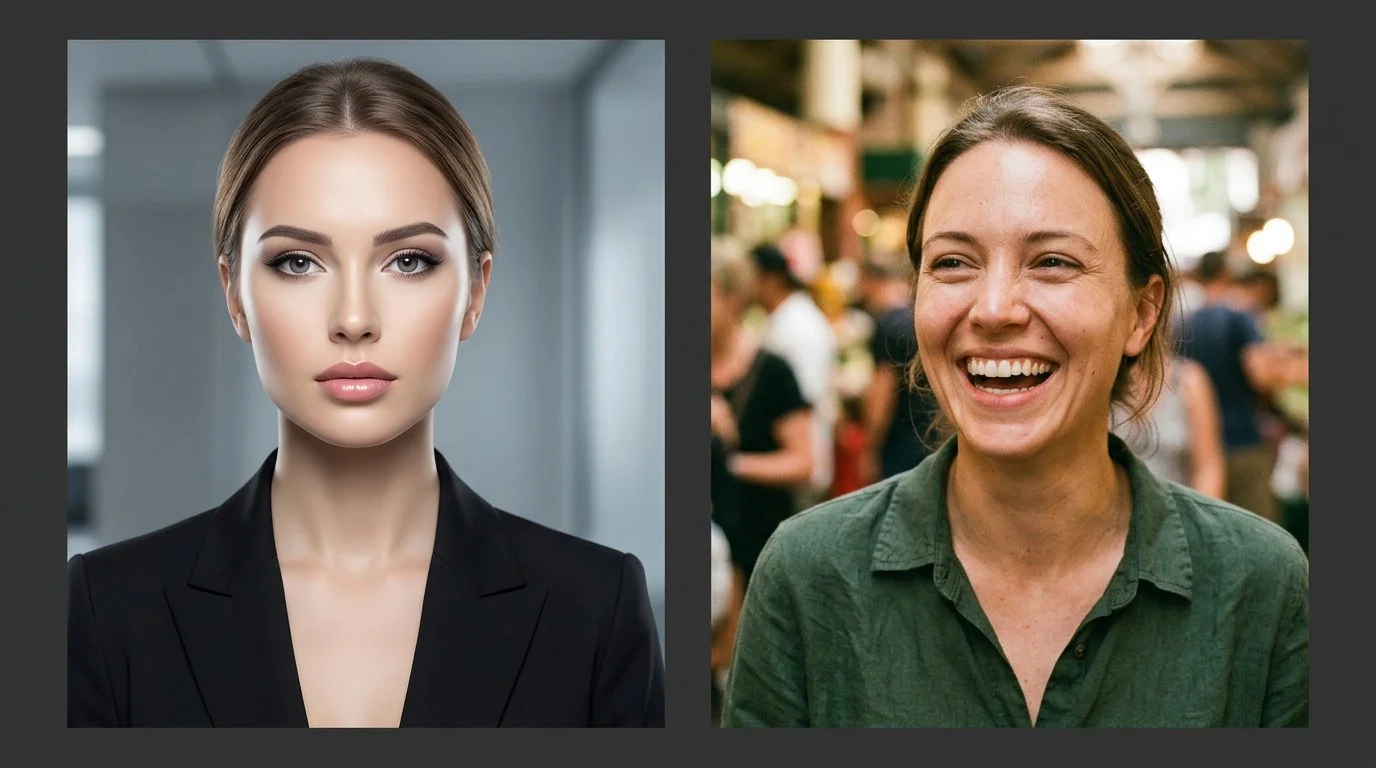

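To confirm, open an HDR compatibility test page that shows each comparison image in both SDR and HDR. If the two versions look clearly different, with the HDR version showing noticeably brighter highlights, your screen is ready for HDR editing. If they look identical, your current setup is only showing SDR.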

Step 2: Edit Your Base Image (SDR)

Start by editing your photo exactly how you normally would. Tweak the exposure, contrast, and colours until you are completely happy with the standard dynamic range (SDR) version. Photos with naturally high contrast and bright highlight areas work best for this process.

Step 3: Enable the HDR Panel

Locate and toggle the HDR button in the Lightroom edit panel.

The image will instantly become brighter, and your histogram will expand to the right, showing extra sections. These sections represent the additional stops of light available exclusively for HDR displays.

Step 4: Control Your Highlights

To keep the image looking natural and intentional, you need to manage the extra brightness.

Stick to the limit: Adobe sets a default HDR limit of around 2.3 stops above SDR white. Staying within it helps your image look consistent across devices with different peak brightness.

Check for clipping: Hold your finger down on the screen while adjusting the Exposure slider (or hold Alt/Option on desktop). Highlights within the safe HDR range are shown in yellow, and areas you have pushed too far are shown in red.

Visualize HDR: Toggle this feature on to see a colour-coded map of your highlights, helping you stay within safe tonal boundaries.

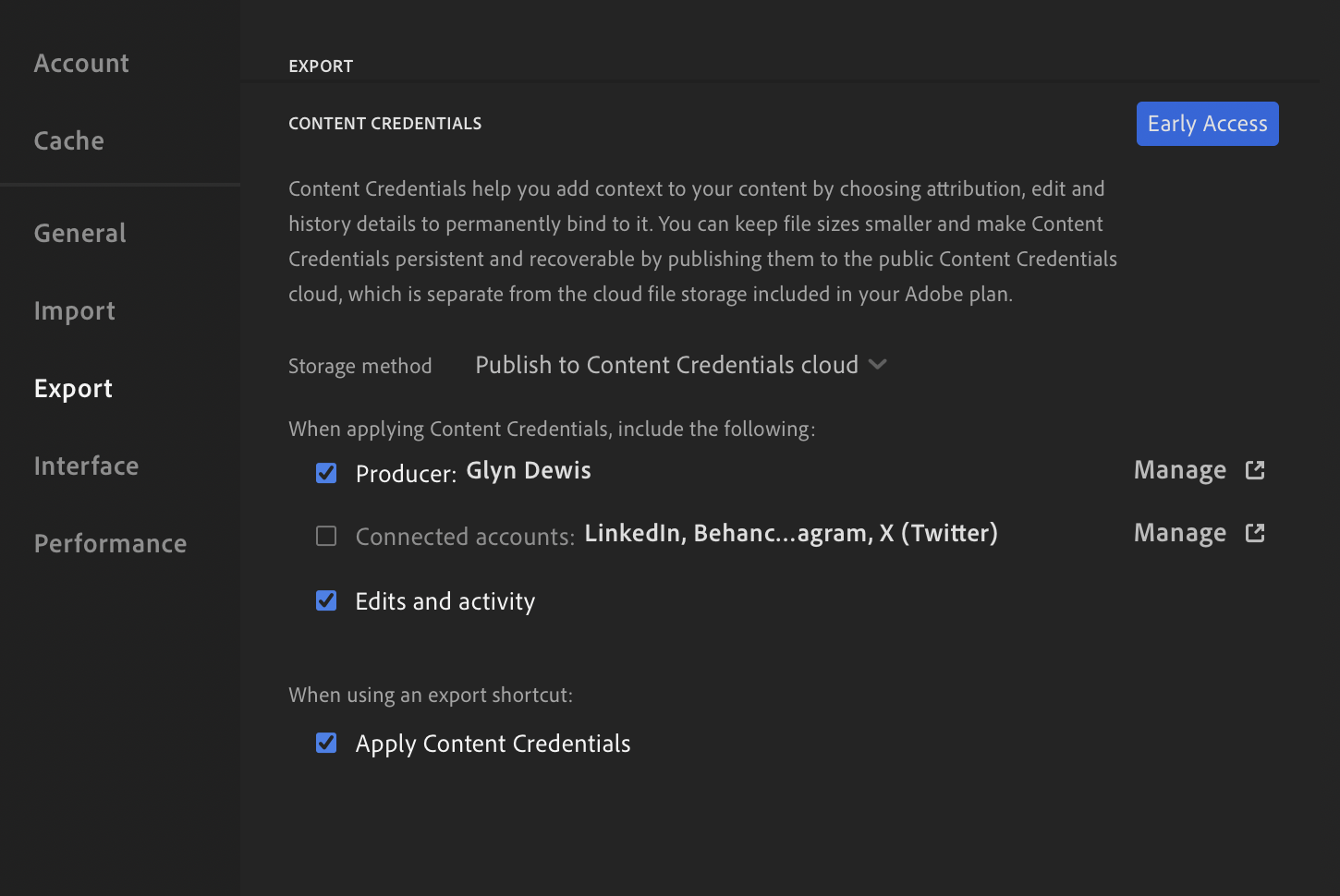
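The 2.3-stop figure is easier to picture as a multiplier: a stop is a doubling of light, so highlights at the limit are 2^2.3 ≈ 4.92 times as bright as SDR white. The sketch below illustrates the idea behind a highlight overlay like the one described in this step. It is a hypothetical illustration, not Lightroom's implementation: it assumes linear pixel values scaled so that 1.0 equals SDR white, and the function names `stops_above_sdr_white` and `classify_highlight` are invented for this example.

```python
import math

# Assumption: pixel values are linear luminance scaled so that 1.0 equals
# SDR reference white. This is an illustrative convention, not Lightroom's
# internal data format.
HDR_LIMIT_STOPS = 2.3  # the default limit mentioned in Step 4


def stops_above_sdr_white(value: float) -> float:
    """Return how many stops brighter than SDR white a linear value is.

    Each stop is a doubling of light, so stops = log2(value / 1.0).
    """
    if value <= 0:
        raise ValueError("linear luminance must be positive")
    return math.log2(value)


def classify_highlight(value: float, limit_stops: float = HDR_LIMIT_STOPS) -> str:
    """Label a pixel the way a simple (hypothetical) highlight overlay might.

    'sdr'  -> at or below SDR white, so no HDR headroom is used
    'hdr'  -> uses HDR headroom but stays within the limit (safe)
    'over' -> exceeds the chosen HDR limit (pushed too far)
    """
    if value <= 1.0:
        return "sdr"
    if stops_above_sdr_white(value) <= limit_stops:
        return "hdr"
    return "over"


if __name__ == "__main__":
    for v in (0.5, 1.0, 2.0, 4.0, 6.0):
        print(f"{v:>4}: {stops_above_sdr_white(v):+.2f} stops -> {classify_highlight(v)}")
```

Running it prints each sample value, its headroom in stops, and its label; a value of 6.0 sits at about 2.58 stops and is flagged as `over`, while 4.0 (exactly 2 stops) stays within the limit.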

Step 5: Export with the Right Settings

To ensure Instagram and web browsers can read your HDR data, use these specific export settings:

File Type: Select AVIF (or JXL).

Color Space: Choose Display P3 (or HDR P3 on desktop).

HDR Output: Ensure this toggle is turned ON.

Step 6: Post to Instagram Safely

When sharing your final image to social media, keep these two tips in mind: the first avoids rendering glitches, and the second shows off the result.

No stickers or text: Adding music to your post is fine, but do not overlay native Instagram text or stickers onto the image, as it breaks the HDR rendering.

Use the Carousel Trick: Share both the standard SDR version and the new HDR version in a single carousel post. Letting viewers swipe between the two makes the difference in brightness immediately obvious.