Removing reflections from glasses has always been one of those Photoshop jobs that feels either impossible or painfully tedious. In this tutorial, I'm showing you how the new Firefly Image Model 5 in the Photoshop Beta handles this specific problem, and I think you're going to find it really useful.

I'm working with a portrait of Thomas Coulter, one of the veterans from my 39-45 Portraits Project, to walk you through exactly how it works.

The Challenge with Older Models

If you've tried using Generative Fill for this before, you'll know that older models like Firefly Image 1 could certainly remove a reflection, but they often introduced other problems at the same time. You'd sometimes end up with subtle changes to facial structure, eyebrows, or the shape of the glasses frames themselves. The reflection might be gone, but the portrait no longer looked quite right.

Why Firefly Image Model 5 is Worth Knowing About

Model 5 has been built with detail preservation as a priority. The idea is that it only changes what you've asked it to change, leaving everything else as close to the original as possible.

Worth knowing: this is a premium model, so each generation uses 10 generative credits rather than one. It also produces only a single variation, but given the quality of the result, that's rarely a problem.
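If you're planning to work through a batch of portraits, it can help to do the credit maths up front. Here is a minimal sketch in Python, assuming the 10-credit cost mentioned above and a standard cost of one credit. The function names and the example balance of 250 credits are made up for illustration; check your own plan for your real allowance.

```python
# Rough credit budgeting for premium generations.
# PREMIUM_COST and STANDARD_COST come from the figures quoted in this tutorial;
# the balance used in the example below is a hypothetical number.
PREMIUM_COST = 10   # credits per generation with Firefly Image Model 5
STANDARD_COST = 1   # credits per generation with a standard model


def credits_needed(portraits, attempts_per_portrait=1, cost_per_generation=PREMIUM_COST):
    """Total credits used if every portrait takes the same number of attempts."""
    return portraits * attempts_per_portrait * cost_per_generation


def generations_affordable(credit_balance, cost_per_generation=PREMIUM_COST):
    """How many whole generations a given credit balance covers."""
    return credit_balance // cost_per_generation


if __name__ == "__main__":
    print(credits_needed(12))            # 12 portraits, one attempt each -> 120
    print(generations_affordable(250))   # hypothetical balance of 250 -> 25
```

Because Model 5 returns a single variation, a second attempt costs another 10 credits, so it's worth getting the selection and prompt right before you hit Generate.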

How to Do It, Step by Step

Open Photoshop Beta - You'll need the Beta version to access the latest Firefly models. [00:56]

Make your selection - Use the Selection Brush Tool to paint over the reflections on the lenses. You don't need to be overly precise; going slightly over the frames is fine. [01:25]

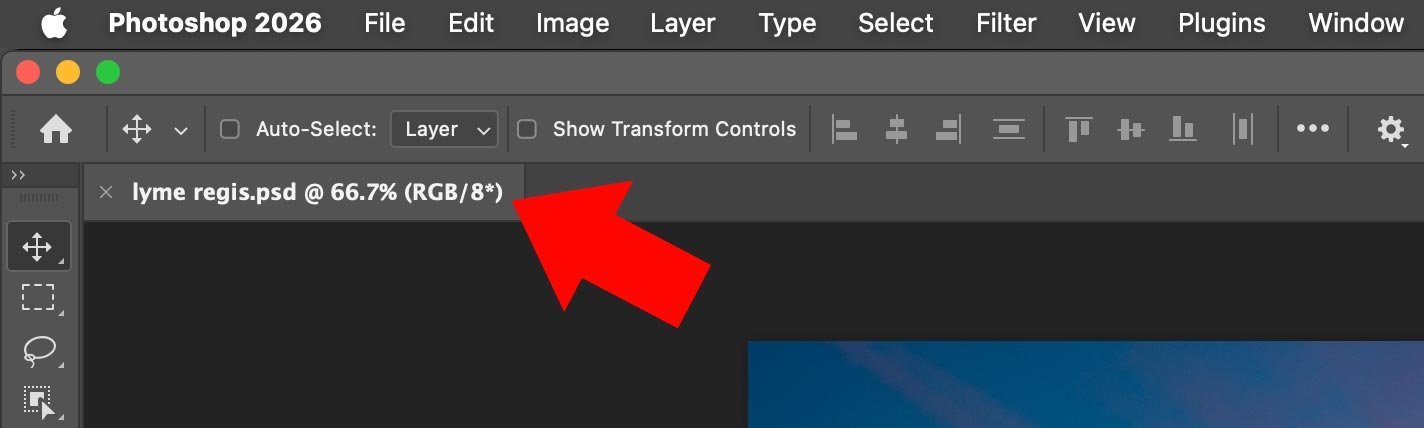

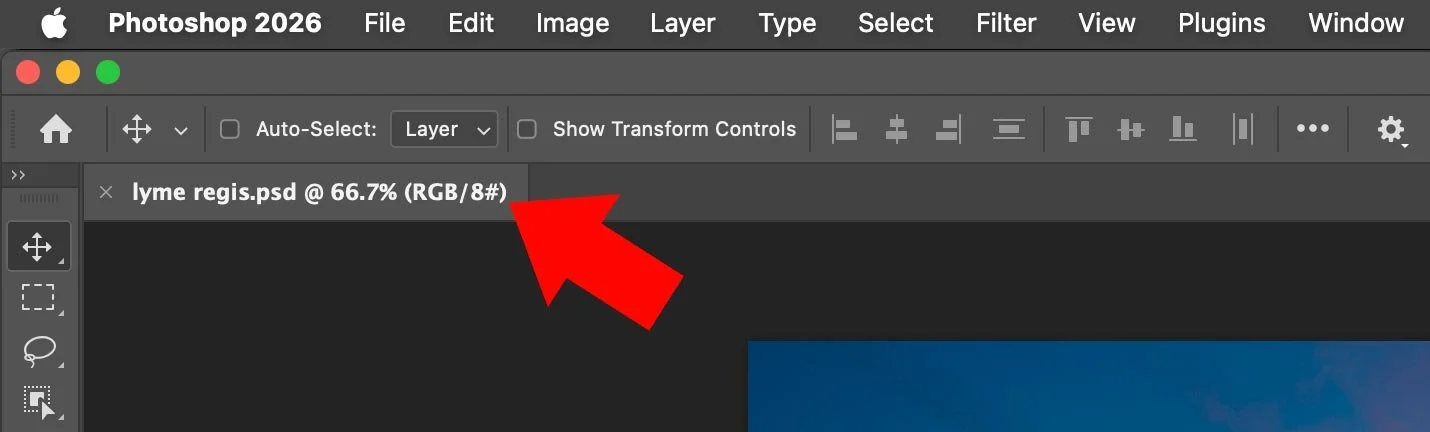

Open Generative Fill - Click Generative Fill in the Contextual Taskbar. If you can't see it, go to Window > Contextual Taskbar. [01:49]

Choose the right model - This is the key step. In the Taskbar settings, look under Adobe Models and select Firefly Image Model 5 (Preview). [06:16]

Enter your prompt - Something simple like "remove the reflection from the glasses" is all you need. [05:32]

Generate - Hit Generate and give it around 10 to 12 seconds. [06:21]

The Results

What I find genuinely impressive here is that once the reflection is gone, everything else stays as it was. The eyebrow hairs, the skin texture, the precise shape of the frames - all of it looks identical to the original file.

Now, Camera Raw and Lightroom do have reflection removal tools built in, and they're well worth trying, particularly on larger reflections. But for detailed areas like eyewear, where precision really matters, this approach in Photoshop gives you a level of control and accuracy that's hard to beat. If you've got portraits sitting in your archive that you've written off as too difficult, this is a good reason to dig them back out.