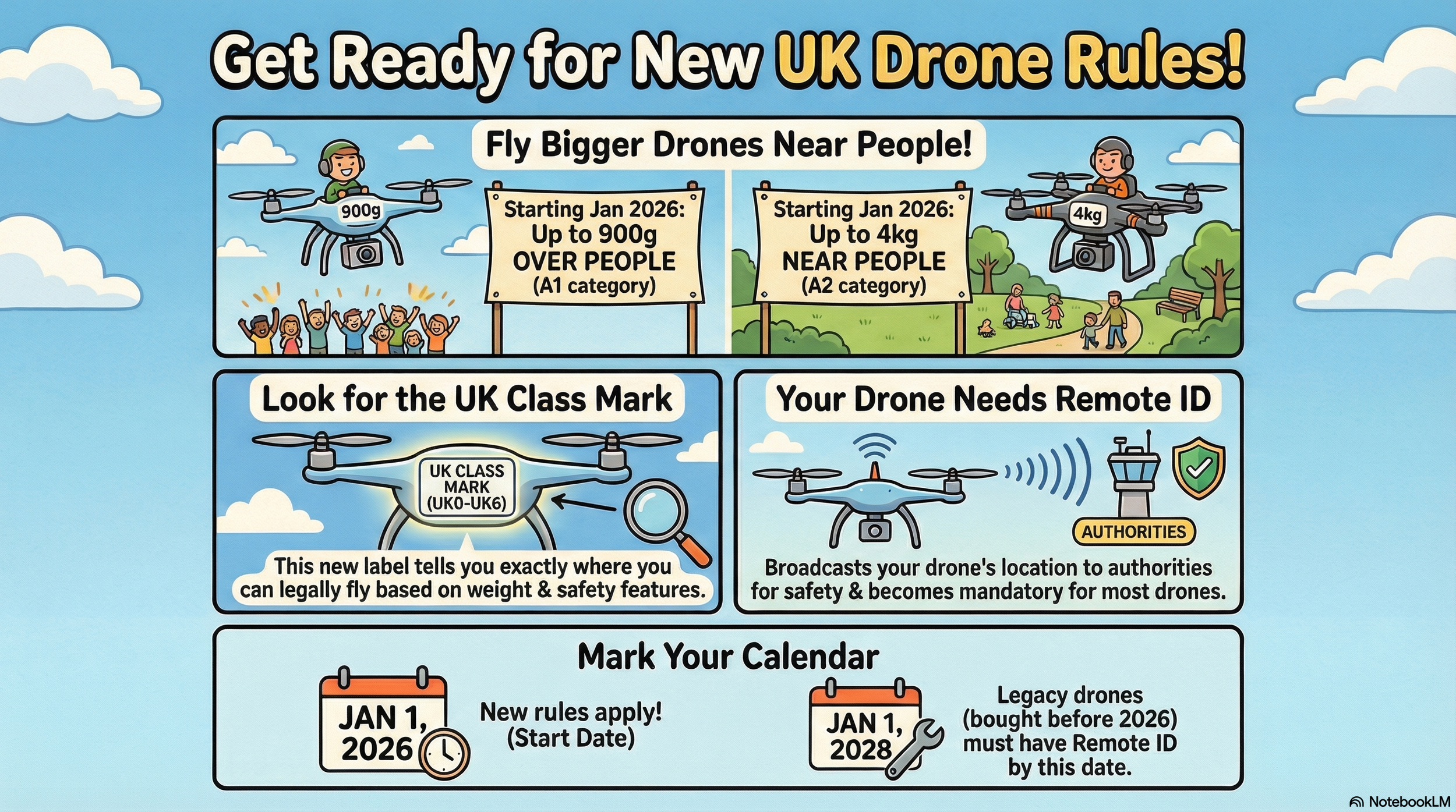

Big changes are coming to the UK drone scene from 1 January 2026, especially around how drones are classed, identified, and registered. This is a plain‑English summary based on the latest CAA guidance.

1. New UK class marks

From 1 January 2026, most new drones sold in the UK for normal hobby and commercial flying will carry a UK class mark from UK0 to UK6. This mark shows what safety standards the drone meets and which set of rules applies.

UK0: Very light drones under 250g, including many small “sub‑250” models.

UK1–UK3: Heavier drones intended for typical Open Category flying, with increasing levels of safety features as the class number goes up.

UK4: Mostly used for model aircraft and some specialist use.

UK5 & UK6: Higher‑risk drones designed for more advanced or specialist operations, usually in the Specific Category.

EU C‑class drones:

If you already own an EU C‑marked drone, it will continue to be recognised in the UK until 31st December 2027, so you can keep flying it under the transitional rules until then.

2. Remote ID – your “digital number plate”

Remote ID (RID) is like a digital number plate for your drone: it broadcasts identification and flight information while you are in the air. This helps the CAA, police and other authorities see who is flying where, and pick out illegal or unsafe flights.

From 1st January 2026

Any UK‑class drone in UK1, UK2, UK3, UK5 or UK6 must have Remote ID fitted and switched on when it is flying.

From 1st January 2028 (the “big” deadline)

Remote ID will also be required for:

UK0 drones weighing 100g or more with a camera.

UK4 drones (often model aircraft) unless specifically exempted.

Privately built drones 100g or more with a camera.

“Legacy” drones (no UK class mark) 100g or more with a camera.
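The two Remote ID phases above can be read as a simple decision rule. The following is a minimal Python sketch of that rule as this article describes it, not an official CAA tool. The function name `remote_id_required` and its parameters are hypothetical, legacy and privately built drones are represented by `uk_class=None`, and exemptions (for example for some UK4 model aircraft) are not modelled.

```python
from datetime import date

# Dates and class lists as stated in this article; check current CAA guidance.
RID_PHASE_1 = date(2026, 1, 1)
RID_PHASE_2 = date(2028, 1, 1)
PHASE_1_CLASSES = {"UK1", "UK2", "UK3", "UK5", "UK6"}


def remote_id_required(uk_class, weight_g, has_camera, on):
    """Return True if Remote ID is required for a flight on the date `on`.

    uk_class: a string such as "UK2", or None for legacy or privately
    built drones (an assumption made for this sketch).
    """
    if uk_class in PHASE_1_CLASSES:
        return on >= RID_PHASE_1
    if on < RID_PHASE_2:
        return False
    if uk_class == "UK4":
        # "Unless specifically exempted": exemptions are ignored here.
        return True
    # UK0, privately built and legacy drones: 100g or more with a camera.
    return weight_g >= 100 and has_camera


# Example: a sub-250g UK0 camera drone before and after the 2028 deadline.
print(remote_id_required("UK0", 249, True, date(2027, 6, 1)))
print(remote_id_required("UK0", 249, True, date(2028, 1, 1)))
```

Keeping the dates and class lists as named constants at the top makes them easy to update if the guidance changes.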

What RID does (and does not) share:

It broadcasts things like your drone’s location, height and an identification code (serial/Operator ID), plus some details about the flight.

It does not broadcast your name or home address to the general public; it is designed for safety and enforcement, not doxxing pilots.

3. Registration

The UK is tightening registration so that more small camera drones are covered. The key change is that the weight threshold drops from 250g to 100g for the Flyer ID, and for the Operator ID where the drone has a camera.

From the new CAA table:

Flyer ID – for the person who flies

Required if your drone or model aircraft weighs 100g to less than 250g (including UK0), and for anything 250g or heavier.

Operator ID – for the person responsible for the drone

Required if your drone:

Weighs 100g to less than 250g and has a camera; or

Weighs 250g or more, even without a camera.

If your drone weighs 100g to less than 250g and has no camera, an Operator ID is optional (though it is still recommended).

In everyday terms:

If your drone has a camera and weighs 100g or more, you should expect to need both an Operator ID and a Flyer ID.

Sub‑100g aircraft remain outside the legal registration requirement, but the CAA still recommends taking the Flyer ID test for knowledge and safety.
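The registration thresholds above reduce to two checks on weight and camera. Here is a minimal Python sketch of those checks as summarised in this article. The function name `required_ids` and the dictionary keys are hypothetical, and the sketch covers only the legal requirement, not the CAA's recommendations.

```python
def required_ids(weight_g, has_camera):
    """Return which IDs are legally required, per the thresholds in this article."""
    # Flyer ID: anything weighing 100g or more.
    flyer_id = weight_g >= 100
    # Operator ID: 250g or more, or 100g or more with a camera.
    operator_id = weight_g >= 250 or (weight_g >= 100 and has_camera)
    return {"flyer_id": flyer_id, "operator_id": operator_id}


# Example: a 150g drone with and without a camera.
print(required_ids(150, True))   # {'flyer_id': True, 'operator_id': True}
print(required_ids(150, False))  # {'flyer_id': True, 'operator_id': False}
```

A result of `False` here means "not legally required", which is different from "not recommended": the Operator ID is still recommended for 100g to 250g drones without a camera, as is the Flyer ID test for sub‑100g aircraft.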

4. Night flying

From 1 January 2026, if you fly at night, your aircraft must have at least one green flashing light switched on. This makes it easier for other people and aircraft to see where it is and in which direction it is moving.

A2 CofC and how close you can fly

The A2 Certificate of Competency (A2 CofC) still matters for flying certain drones closer to people. Under the new regime:

With an A2 CofC, you can fly UK2‑class drones:

As close as 30m horizontally from uninvolved people in normal operation.

Down to 5m in a dedicated “low‑speed mode” if your drone supports it and you comply with all conditions.

For legacy drones under 2kg, you should still keep at least 50m away from uninvolved people when using A2‑style privileges under the transitional rules.
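The A2 CofC separation distances above can be written as a small lookup. This is a rough Python sketch under the figures quoted in this article. The function name `a2_min_distance_m` is hypothetical, legacy drones are represented by `uk_class=None`, and a result of `None` means only that this section does not cover the case.

```python
def a2_min_distance_m(uk_class, weight_g, low_speed_mode=False):
    """Minimum horizontal distance (metres) from uninvolved people with an A2 CofC.

    Returns None when the drone is not covered by the A2 rules summarised here.
    """
    if uk_class == "UK2":
        # 5m only applies in a dedicated low-speed mode, if the drone supports it.
        return 5 if low_speed_mode else 30
    if uk_class is None and weight_g < 2000:
        # Legacy drone (no UK class mark) under 2kg, transitional rules.
        return 50
    return None


# Example: a UK2 drone in normal operation and in low-speed mode.
print(a2_min_distance_m("UK2", 900))                       # 30
print(a2_min_distance_m("UK2", 900, low_speed_mode=True))  # 5
```

Location and type of operation can add further restrictions that a lookup like this does not capture.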

Always check the latest CAA drone code for the category you are flying in, as extra restrictions may apply depending on location and type of operation.

5. What you need to do

If you are already flying legally today, you do not need to panic, but you should plan ahead over the next couple of years.

Now–end of 2025

Make sure you have a valid Flyer ID and Operator ID if your drone falls into the current registration thresholds.

From 1st January 2026

When buying a new drone, check that it has the correct UK class mark and built‑in Remote ID if it is UK1, UK2, UK3, UK5 or UK6.

Use a green flashing light when flying at night.

By 1st January 2028

If you own a legacy drone or UK0/UK4 aircraft 100g or more with a camera, ensure you are ready to comply with Remote ID, either through built‑in hardware or an approved add‑on.

If you keep an eye on these dates and make sure your registration, class marks and Remote ID are in order, your current setup should remain usable under the new rules for years to come.